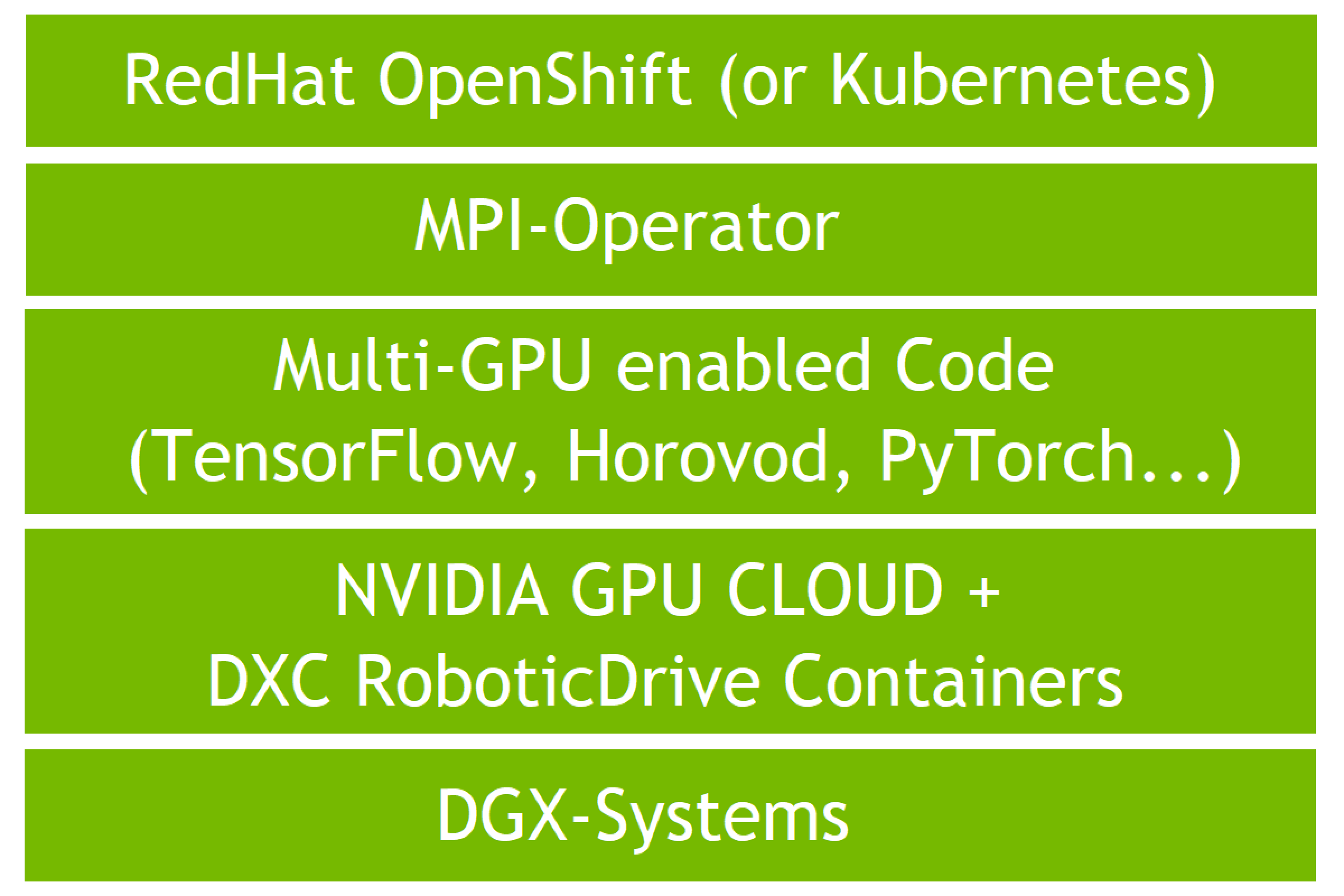

Validating Distributed Multi-Node Autonomous Vehicle AI Training with NVIDIA DGX Systems on OpenShift with DXC Robotic Drive | NVIDIA Technical Blog

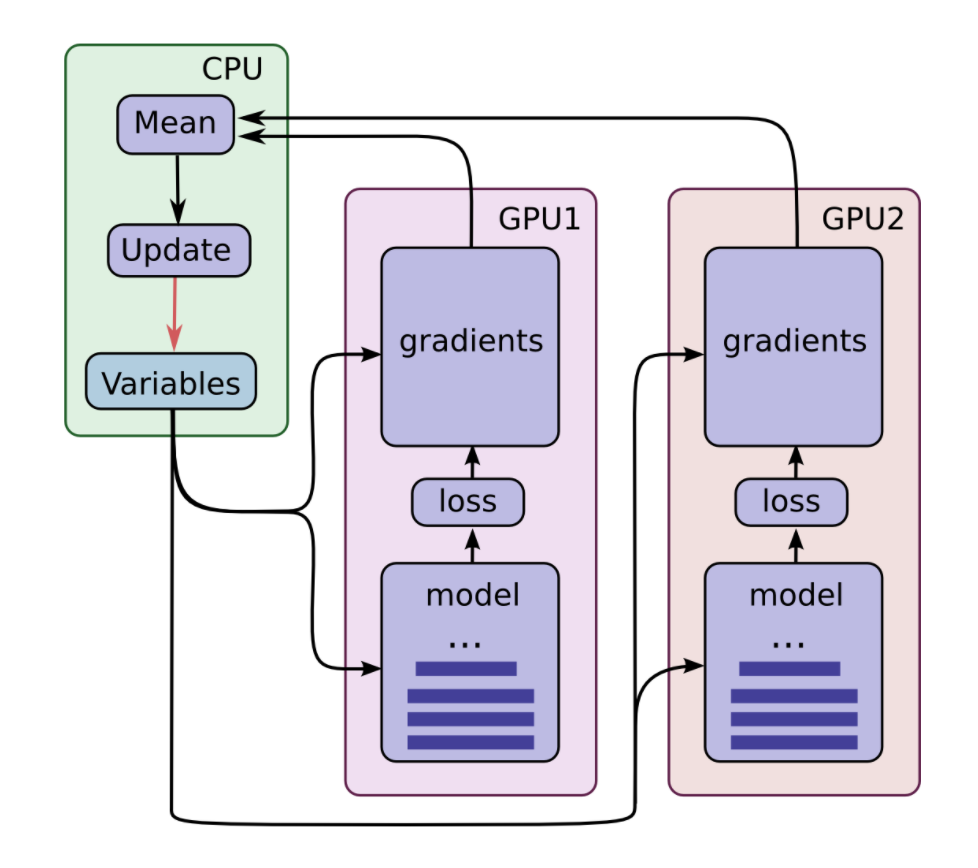

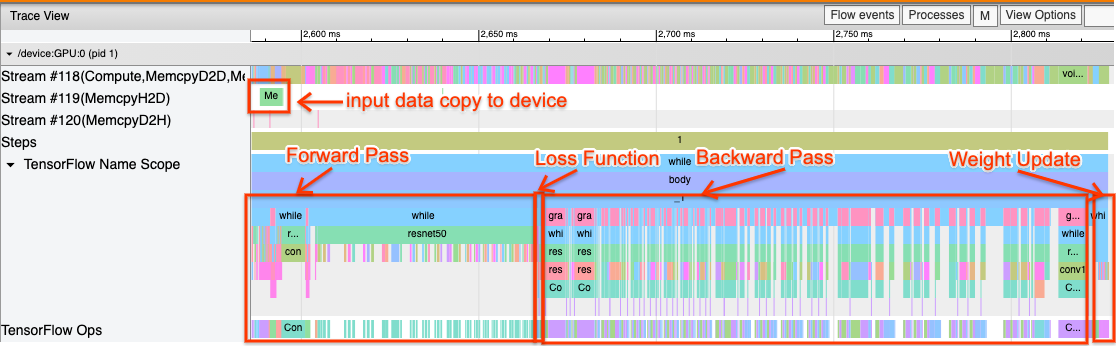

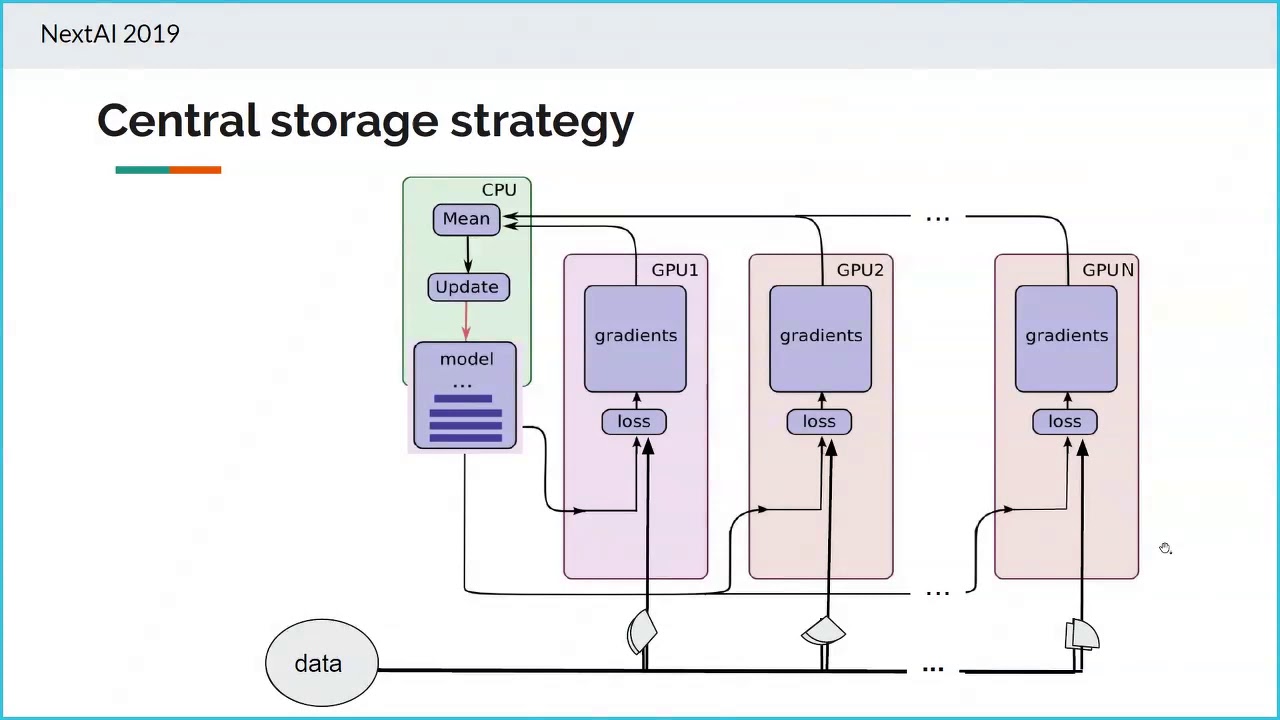

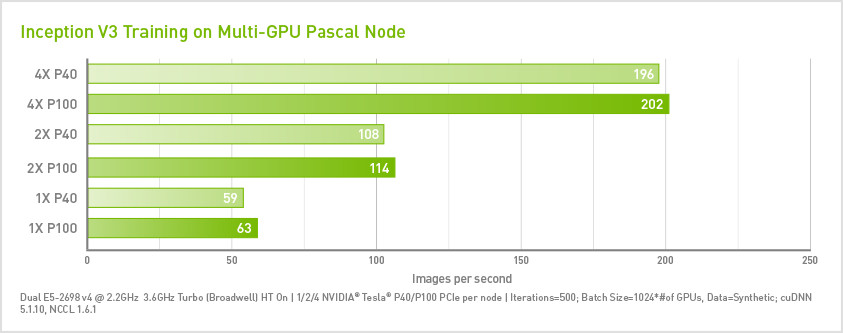

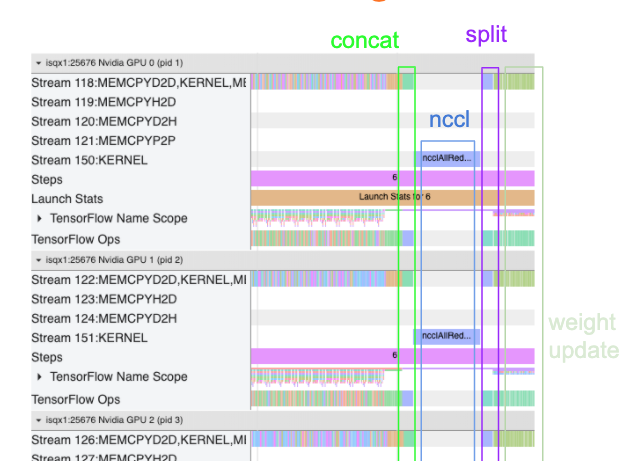

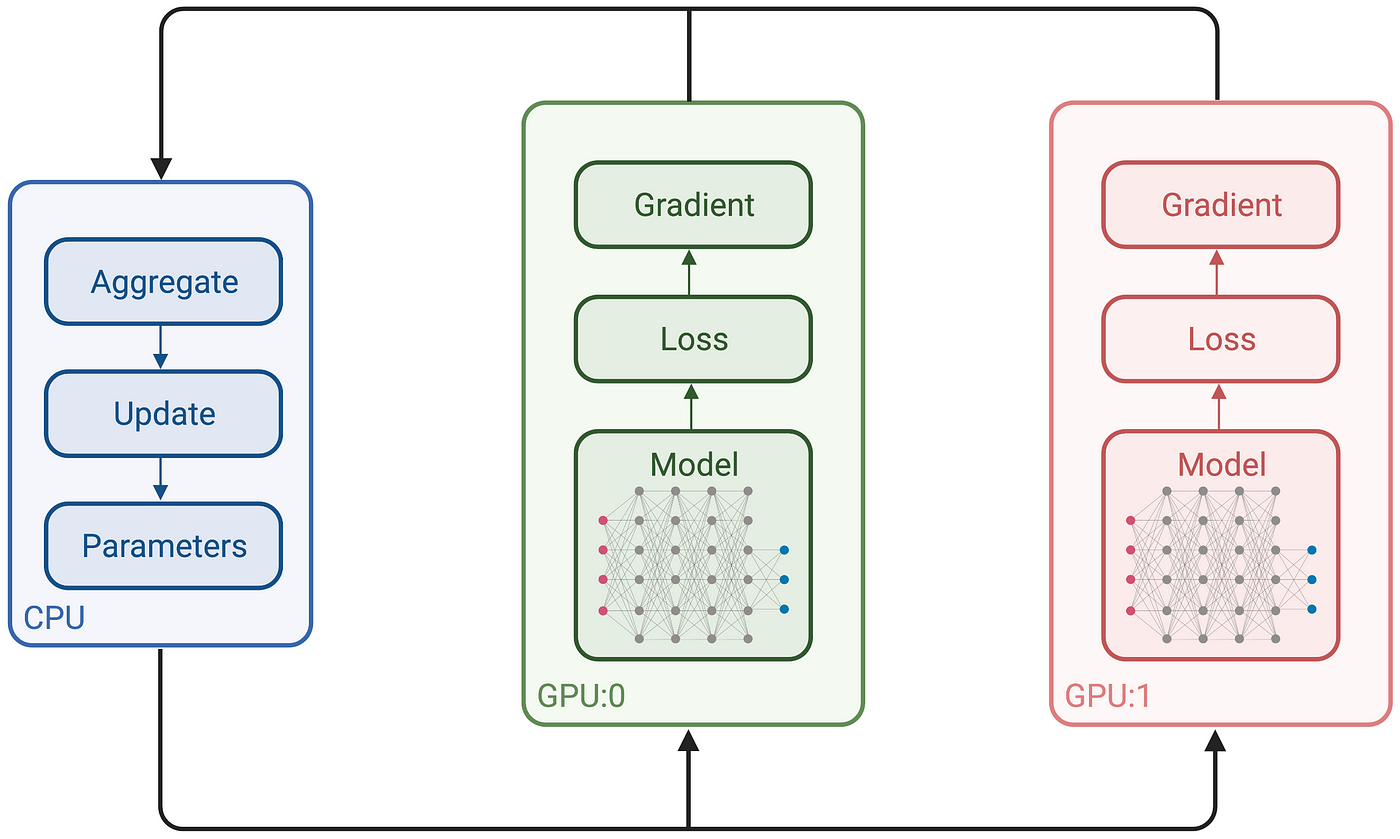

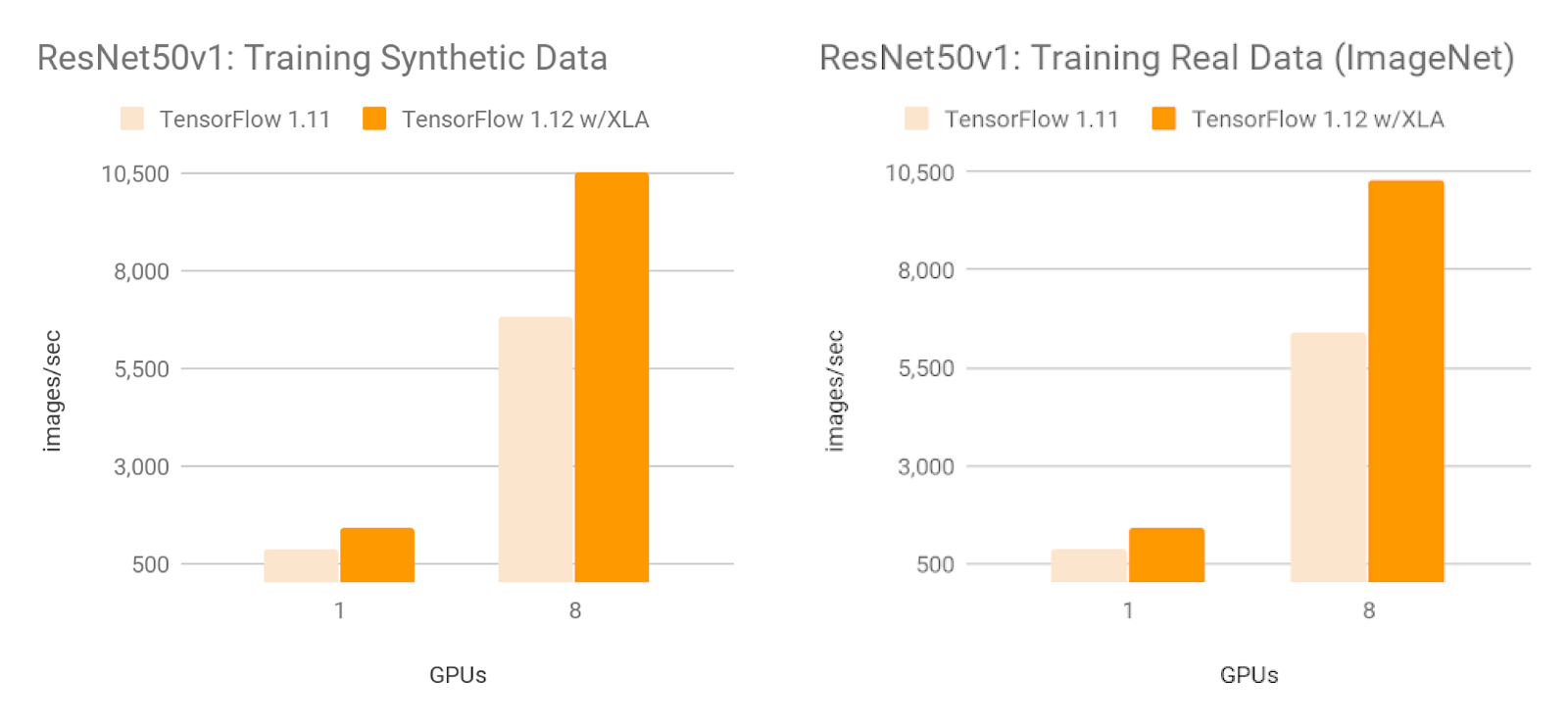

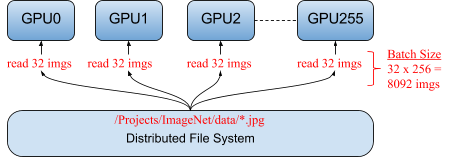

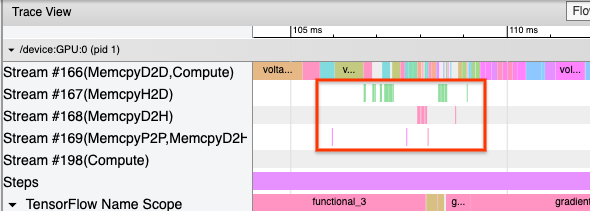

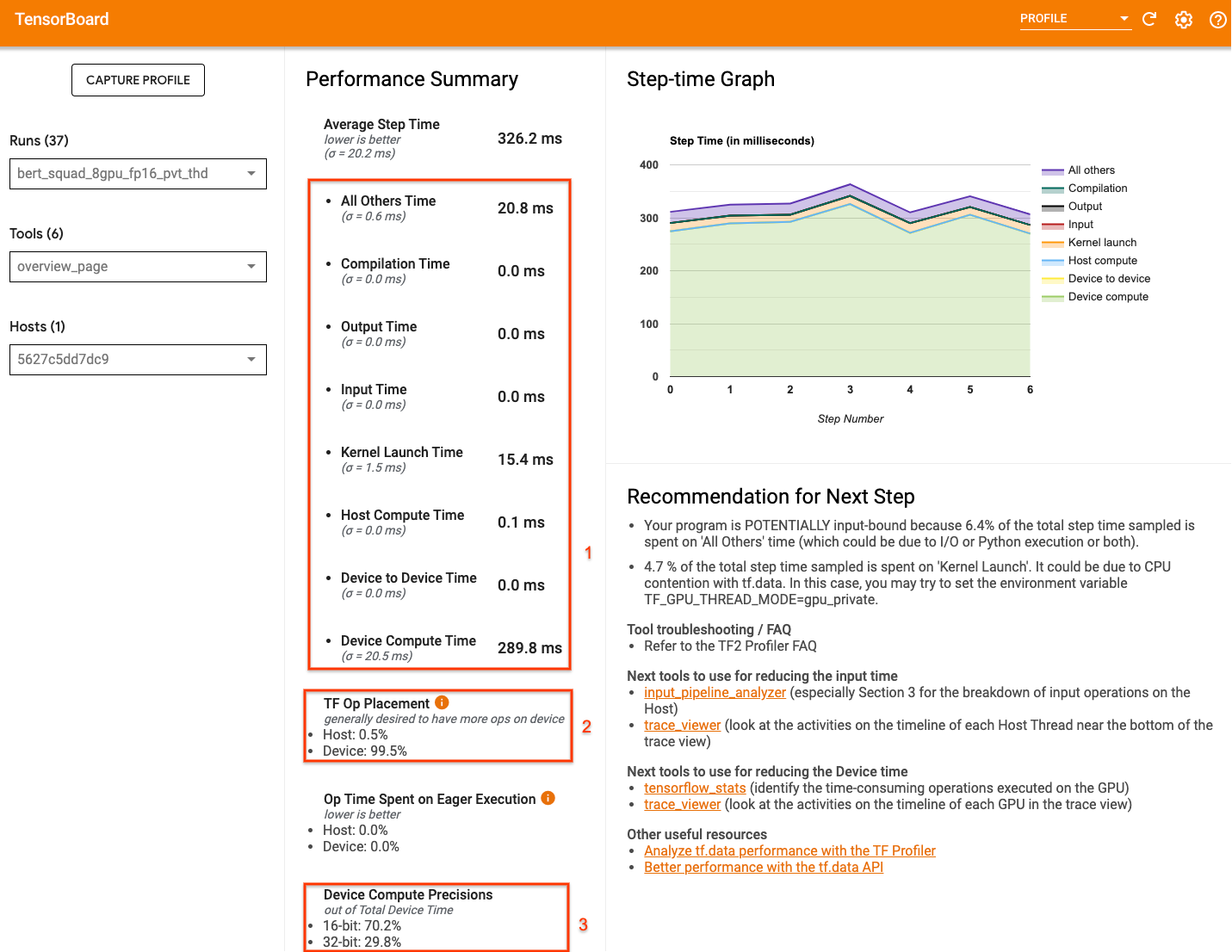

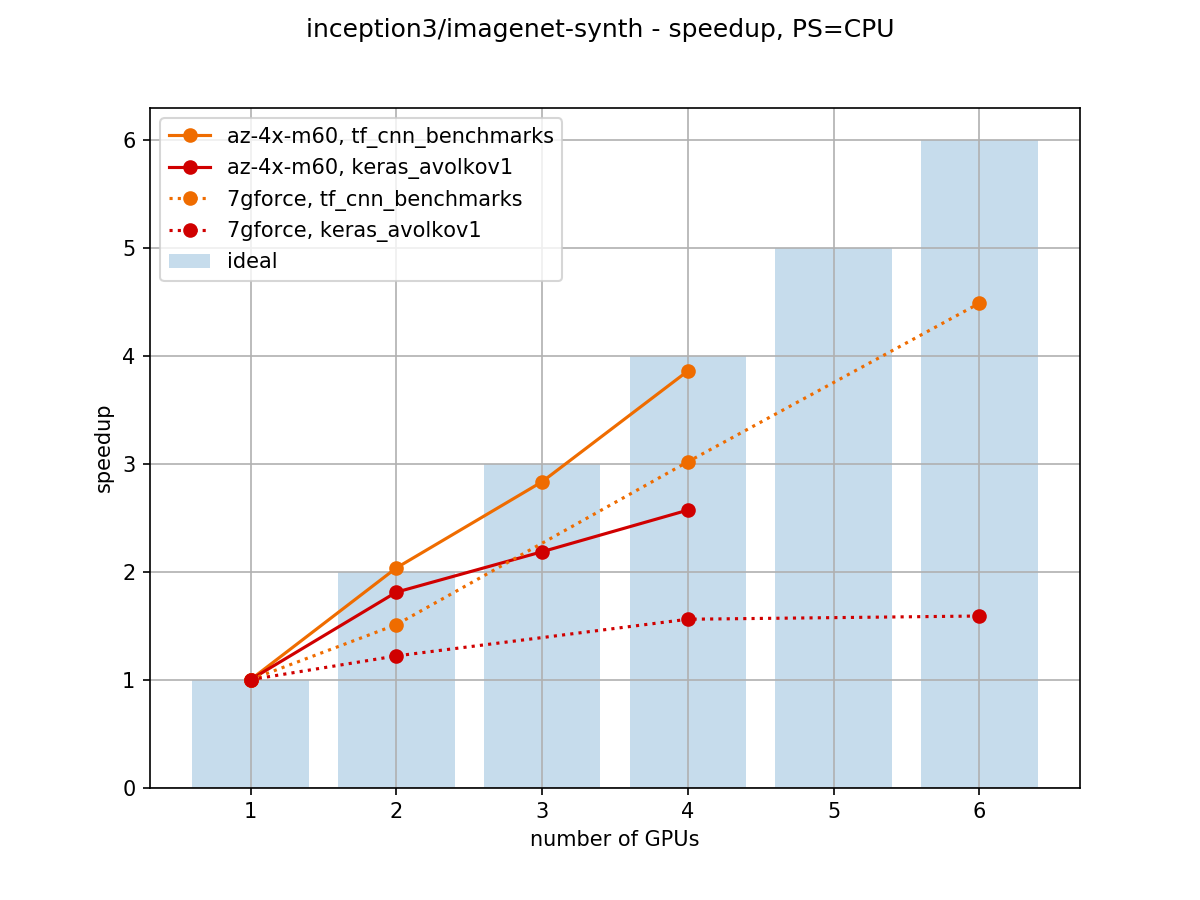

Towards Efficient Multi-GPU Training in Keras with TensorFlow | by Bohumír Zámečník | Rossum | Medium

GitHub - moritzhambach/CPU-vs-GPU-benchmark-on-MNIST: compare training duration of CNN with CPU (i7 8550U) vs GPU (mx150) with CUDA depending on batch size

Sharing GPU for Machine Learning/Deep Learning on VMware vSphere with NVIDIA GRID: Why is it needed? And How to share GPU? - VROOM! Performance Blog

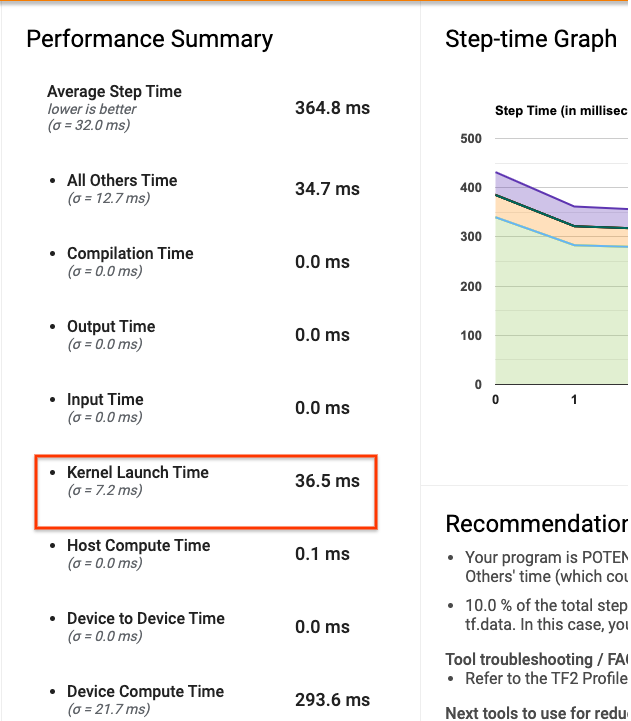

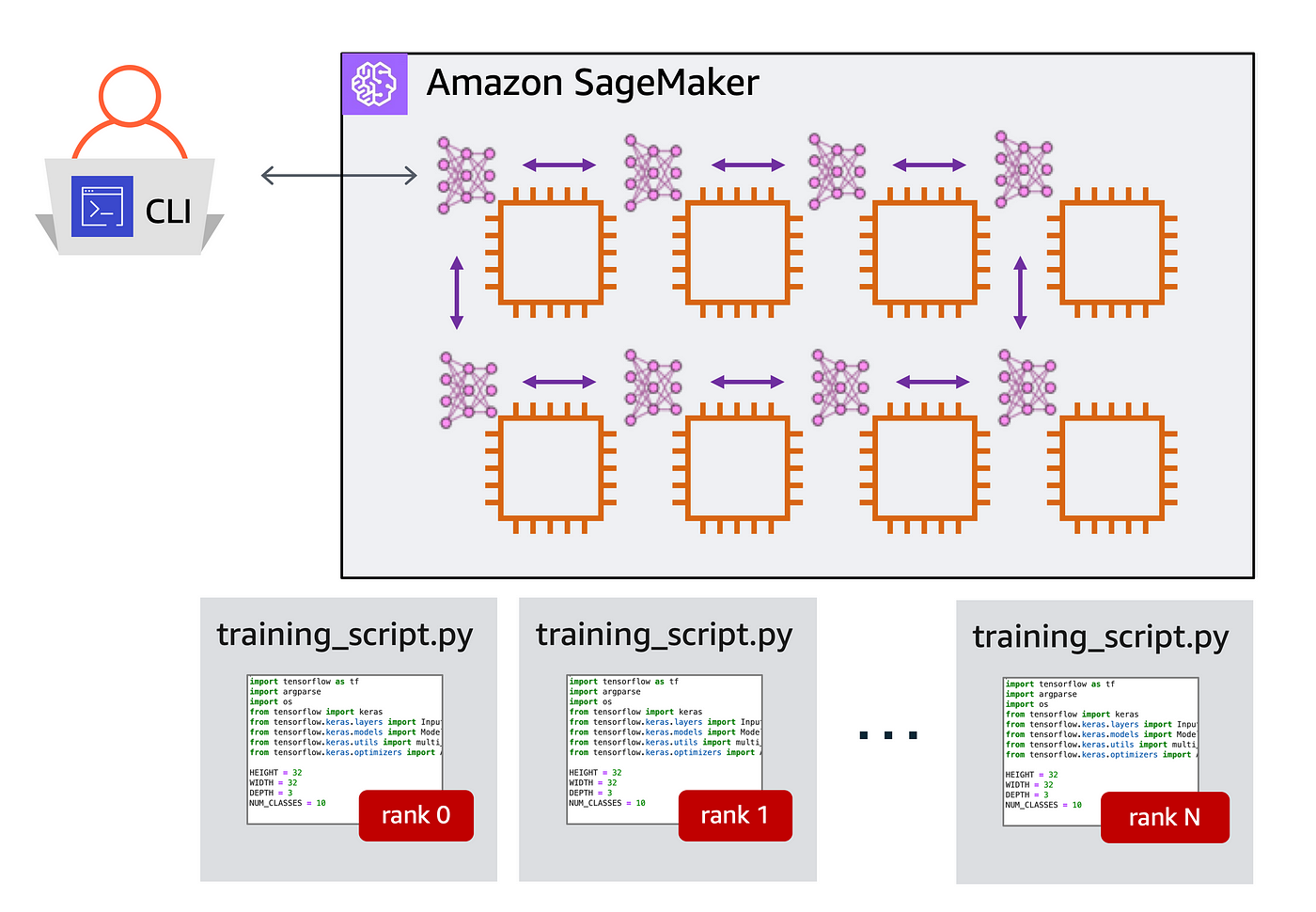

A quick guide to distributed training with TensorFlow and Horovod on Amazon SageMaker | by Shashank Prasanna | Towards Data Science